AI-driven fake content fuels information warfare

The new generation of information warfare, carried out through AI-supported fake content on digital platforms, is blurring the truth and pushing public opinion into deep uncertainty. According to experts, its aim is to undermine trust in reality.

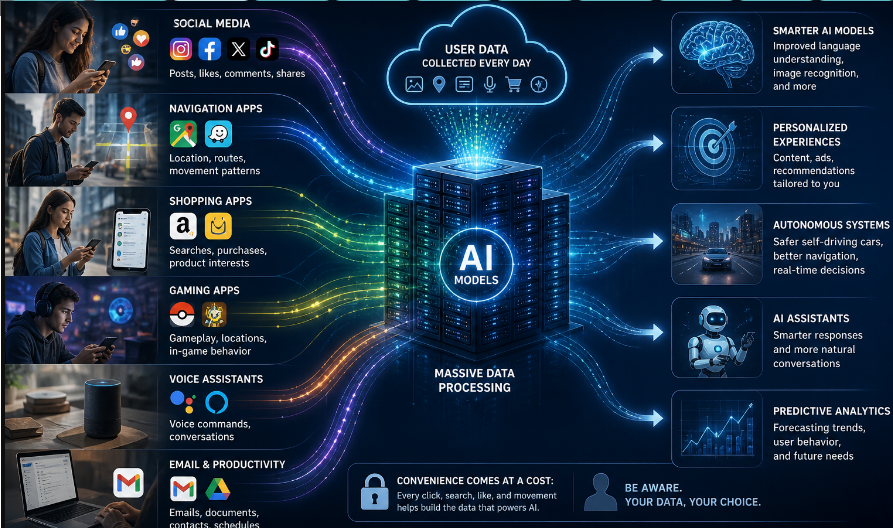

As technology advances, “information warfare,” which refers to the use of information and communication technologies for perception management, is now conducted largely through AI-generated fake content, mostly on social media, CE Report reports, citing Anadolu Agency.

While it was previously carried out through mass media such as newspapers and television, in the digital age it has taken on a new dimension through social media and AI-generated fake content.

This shift renders traditional propaganda methods less effective, blurring the truth and creating uncertainty about sources.

In this sense, social media has become the “digital front” of war, especially during times of conflict, while fake content undermines the foundation of truth.

According to experts, the rapid spread of deepfakes and other fake content may aim not to make people believe a single false narrative, but rather to create general uncertainty about which information can be trusted.

False content may appear more dominant

Shahriar Kaisar, a lecturer at RMIT University, told Anadolu Agency that social media platforms play an important role in shaping public perception during conflicts, noting that many people follow these networks as alternative news sources.

Warning that misinformation can spread very quickly on these platforms, Kaisar said this may cause some inaccurate perspectives to appear “more dominant.”

“Since algorithms are generally designed to maximize user engagement, they can amplify certain narratives over others. This means that emotionally strong, polarizing, or visually striking content is more likely to be promoted,” he said.

Information warfare in the digital age

Kaisar pointed out that digital tools such as AI and deepfake technology make it possible to produce highly realistic but fake audio and video, and to tailor propaganda content to specific targets.

He stated that during periods of conflict, many governments try to shape and control public perception through social media platforms, spreading their own narratives to influence both national and international audiences.

Kaisar noted that AI-generated deepfake videos can further strengthen this effect:

“These tools can be misused to produce highly realistic content and spread it rapidly within communities, making it difficult for ordinary users to distinguish between truth and falsehood. While not all governments use these tools in the same way, they are increasingly becoming part of a broader information war.”

He emphasized that information warfare has changed significantly over the past 25 years in terms of speed, scale, and technology. In the early 2000s, the most effective campaigns spread through television, newspapers, simple websites, and emails. Today, social media platforms, messaging apps, and streaming services allow information to circulate globally almost instantly, while algorithmic feeds often prioritize sensational or polarizing content over accurate information.

Kaisar also noted that a much wider range of actors is now involved, including online communities and content creators.

Looking ahead, he said that over the next 10 years, information warfare could become an important part of traditional military operations, adding that deepfakes and similar fake content may become so widespread that the goal will be less about convincing people of a single false story and more about creating general uncertainty about what can be trusted.