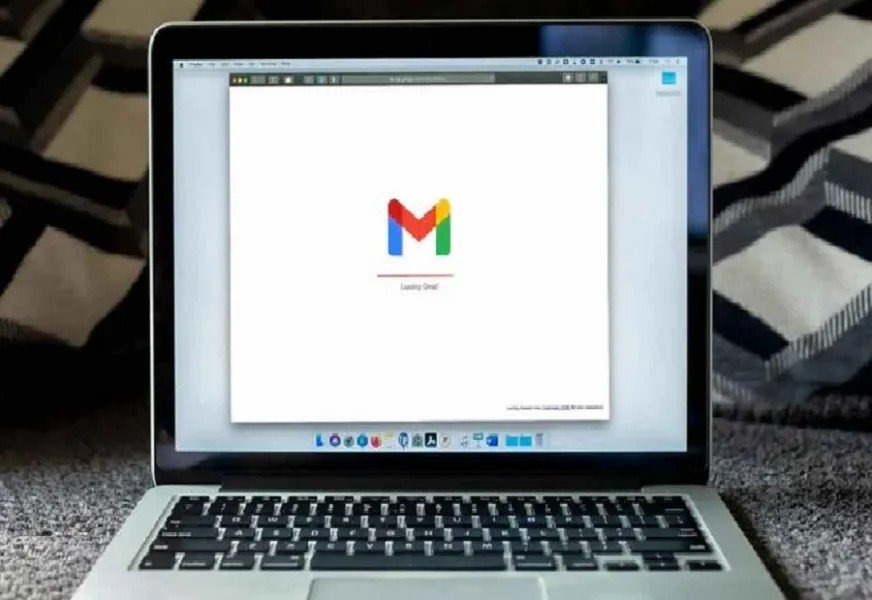

AI exploit lets hackers steal Gmail data without clicks

A new cybersecurity warning reveals that hackers temporarily weaponized ChatGPT’s Deep Research tool in a sophisticated attack called ShadowLeak, which stole Gmail data without any clicks, downloads, or user interaction, CE Report quotes Kosova Press.

Radware researchers found that attackers could insert hidden commands in seemingly harmless emails, using tricks such as white text on a white background, tiny fonts, or CSS. When a user asked the Deep Research agent to analyze their Gmail inbox, the AI unknowingly executed the attacker's hidden instructions.
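To make the hidden-text tricks concrete, here is a minimal Python sketch that flags text a human reader would probably not see in an HTML email. It is an illustration only, not the researchers' tooling or OpenAI's fix. The style patterns, the `HiddenTextFinder` class, and the `find_hidden_text` helper are all hypothetical names and heuristics chosen for this example. It checks inline styles only and assumes reasonably well-formed HTML.

```python
import re
from html.parser import HTMLParser

# Assumed heuristics: inline-style patterns that commonly hide text from a reader.
# Crude on purpose: white text is flagged regardless of the actual background.
SUSPICIOUS_STYLE = re.compile(
    r"display\s*:\s*none"
    r"|visibility\s*:\s*hidden"
    r"|opacity\s*:\s*0(?:\.0+)?\s*(?:;|$)"
    r"|font-size\s*:\s*[01](?:\.\d+)?\s*(?:px|pt)"
    r"|(?<![-\w])color\s*:\s*(?:#fff(?:fff)?\b|white\b)",
    re.IGNORECASE,
)

# Elements that never have a closing tag, so they must not be tracked on the stack.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class HiddenTextFinder(HTMLParser):
    """Collects text nested inside elements whose inline style looks hidden."""

    def __init__(self):
        super().__init__()
        self._stack = []       # one bool per open element: hidden (itself or via ancestor)?
        self.hidden_text = []  # text fragments found inside hidden elements

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        style = dict(attrs).get("style") or ""
        inherited = bool(self._stack and self._stack[-1])
        self._stack.append(inherited or bool(SUSPICIOUS_STYLE.search(style)))

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self._stack:
            self._stack.pop()

    def handle_data(self, data):
        if self._stack and self._stack[-1] and data.strip():
            self.hidden_text.append(data.strip())


def find_hidden_text(html: str) -> list[str]:
    """Return text fragments that appear inside suspiciously styled elements."""
    finder = HiddenTextFinder()
    finder.feed(html)
    finder.close()
    return finder.hidden_text


email_html = (
    "<p>Hi, please see the agenda for Monday.</p>"
    '<div style="color:#ffffff; font-size:1px">hidden instruction text goes here</div>'
)
print(find_hidden_text(email_html))
```

A filter like this could run before an email is handed to an AI agent, but it is easy to evade (external stylesheets, off-screen positioning, near-white colors), so it should be read as a demonstration of the trick rather than a defense.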

The Deep Research agent then used its integrated browsing tools to extract sensitive data and send it to an external server. All of this happened within OpenAI’s own cloud environment, making it invisible to local antivirus software or firewalls.

Radware researchers discovered the zero-day vulnerability in June 2025. OpenAI patched it in early August, but experts warn that similar flaws may reappear as AI tools increasingly integrate with services like Gmail, Dropbox, and SharePoint.

In a related test, security firm SPLX found ChatGPT agents could be tricked into solving CAPTCHAs by inheriting a manipulated chat history — even mimicking human cursor movements to bypass bot detection.

Researchers urge caution, as “context poisoning” and request manipulation can bypass AI safeguards silently. OpenAI has patched the exploit, but staying proactive is key.

Recommended User Actions:

- Disconnect unused app integrations (like Gmail or Google Drive).
- Avoid sending full inboxes or documents to AI tools for analysis.
- Install strong antivirus software on all devices.